AI's Elephant In The Room

There are two stories being told about AI right now.

On one hand, there’s the likely more familiar story. It’s often in, behind, or around the headlines. It’s being told breathlessly and constantly: across industries, across the political spectrum, on every major news network, in company meetings. It says that AI is both imminently and, somehow, currently, unnervingly capable. Half unbridled exuberance, half white-knuckled anxiety.

The past several weeks have demonstrated the alluring hold this first story has. An open source AI agent framework goes viral, ships with glaring security issues, buys a guy a Hyundai, and gets its creator hired by OpenAI and its AI agent social network spinoff acquired by Meta. A karaoke machine company and a well-written sci-fi dystopia compete to see which can elicit a bigger jump scare from the stock market. The exuberance and the anxiety feed off each other.

There are some legitimate reasons this story persists. The St. Louis Fed found that over half of U.S. adults now use generative AI in some capacity, outpacing both PC and internet adoption at the same stage after their first mass-market products. In the software industry, AI coding assistants have been a breakout hit, with surveys showing adoption between 72% and 91%.

But there’s another story also being told. It’s not as loud. It isn’t a secret; it just isn’t as provocative. It’s not typically told by the politicians, the headlines, the CEOs, or the industry analysts. You’ll hear it when talking to technical folks who regularly use or implement the technology. Their read on things tends to line up with the following:

According to S&P Global, 42% of companies abandoned most of their AI initiatives, up sharply from 17% the prior year. The average organization scrapped 46% of proof-of-concept projects before reaching production.

PwC’s Global CEO Survey found that 56% of CEOs report neither revenue increase nor cost decrease from AI over the prior 12 months. Only 12% report both.

BCG’s AI Radar reported that 60% of CEOs have intentionally slowed AI implementation due to concerns over errors and malfunctions.

In other words, despite high usage and adoption in some areas, implementations are struggling.

The U.S. Census Bureau tells a similar story. Business use of AI in actual production rose from 3.7% in September 2023 to roughly 10% by September 2025. When the Bureau was later prodded to broaden its criteria to include any business function, the number jumped to 17.6% (it’s safe to say this includes even nominal chatbot usage). Over half of U.S. adults are using gen AI, but fewer than 20% of businesses are using it in actual production.

No matter how you look at it, there’s a large gap between these two stories, in both narrative and numbers. But both stories do agree on one point: the capabilities are indeed real.

So if capability seemingly isn’t the problem, then what is?

The Capability-Reliability Gap

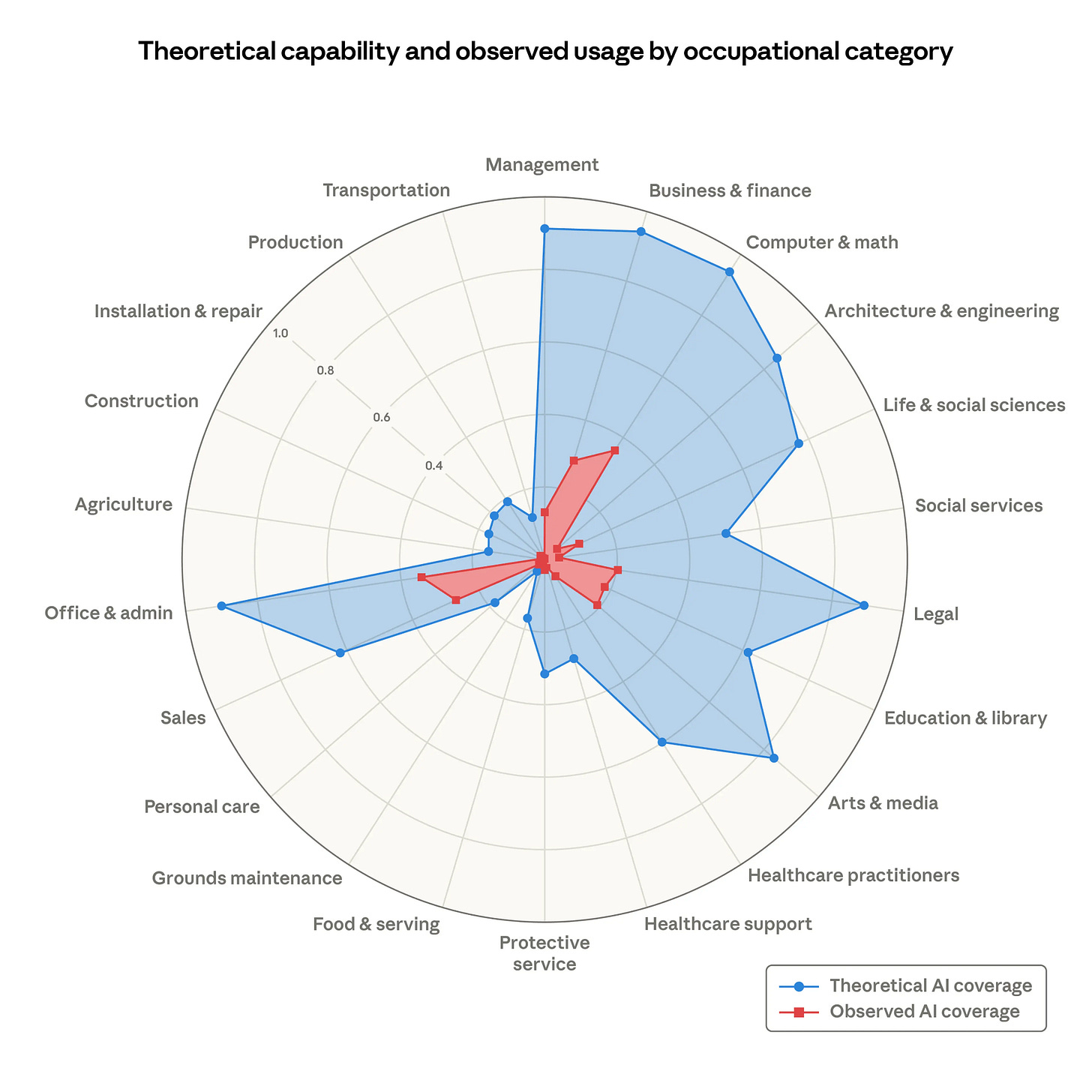

Anthropic put out an article a couple weeks ago introducing a measure that looks at theoretical AI capability vs. observed real world usage across a variety of jobs.

This chart of theirs shows the massive gap between theoretical capabilities and actual usage. Even arguably the best-fit job category (“Computer & math” occupations) is supposedly only at 33% coverage. But the paper frames all of that gap as a diffusion timing problem.

As capabilities advance, adoption spreads, and deployment deepens, the red area will grow to cover the blue.

It’s a teleological assumption. It’s presented as inevitable.

The article pays marginal lip service to asking why the gap exists but offers no serious interrogation. This “capability-first” framing is deeply embedded in the AI industry and shows up everywhere.

A draft paper published last month puts some rigorous measurement behind what many of us have been feeling, and I believe it helps explain that coverage gap.

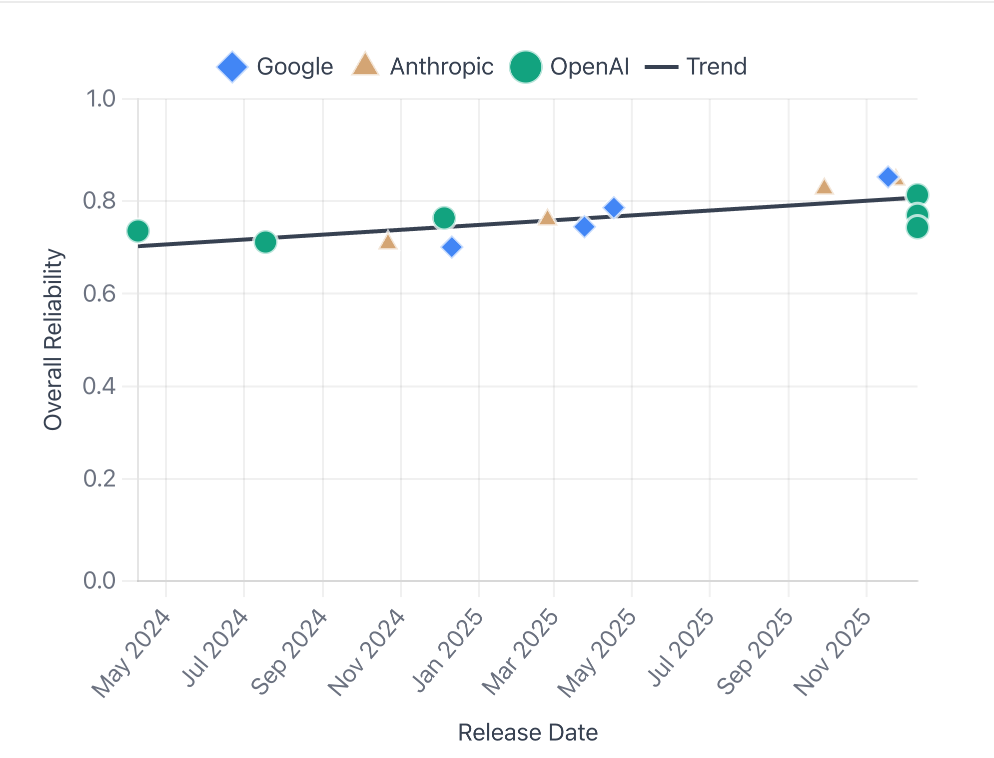

“Towards a Science of AI Agent Reliability”, from Stephan Rabanser, Sayash Kapoor, and Arvind Narayanan, evaluated 14 models from OpenAI, Google, and Anthropic across 18 months of releases. Their core finding was that nearly two years of rapid capability progress produced only modest reliability gains.

All three major providers cluster together on this. It’s an industry-wide pattern.

It often feels like the AI industry is continually glossing over the fact that capability and reliability are fundamentally different qualities. We tend to use “accurate” and “reliable” interchangeably, but they describe different things. A model can ace a benchmark (capability/accuracy) and still be a liability in production (reliability). The authors put it well:

When we consider a coworker to be reliable, we don’t just mean that they get things right most of the time. We mean something richer: they get it right consistently, not right today and wrong tomorrow on the same thing. They don’t fall apart when conditions aren’t perfect. They tell you when they’re unsure rather than confidently guessing. When they do mess up, their mistakes are more likely to be fixable than catastrophic.

That “richer” meaning is what the paper breaks down into four dimensions.

Consistency: Agents that can solve a task often fail on repeated attempts under identical conditions. Many models have trouble giving a consistent answer, with outcome consistency scores ranging from 30% to 75% across the board.

Robustness: Most models handle genuine technical failures (server crashes, API timeouts) gracefully. But if we rephrase the instructions with the same semantic meaning, performance drops substantially.

Predictability: Agents are not good at knowing when they’re wrong. This is the weakest dimension across the board. When agents report confidence, it often carries little signal. On one benchmark, most models couldn’t distinguish their correct predictions from incorrect ones better than chance.

Safety: Recent models are noticeably better at avoiding constraint violations, though financial errors, such as incorrect charges, remain a common failure mode. We use safety narrowly to mean bounded harm when failures occur, not broader concerns like alignment. We are still iterating on how we measure safety, so we report it separately from the aggregate reliability score.
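One way to make the first three dimensions concrete is to score them from repeated runs of your own agent. The sketch below is a minimal illustration under stated assumptions: the metric definitions (pairwise agreement across identical runs, success-rate drop under paraphrase, and a rank-based AUROC for self-reported confidence) are simplified stand-ins chosen for illustration, not the formulas from the Rabanser, Kapoor, and Narayanan paper, and the run data is hypothetical.

```python
from itertools import combinations

# NOTE: simplified stand-in metrics for illustration, not the paper's definitions.

def outcome_consistency(outcomes: list[bool]) -> float:
    """Fraction of run pairs (same task, identical conditions) that agree.

    1.0 means every repeated run had the same success/failure outcome.
    """
    pairs = list(combinations(outcomes, 2))
    if not pairs:
        return 1.0
    return sum(a == b for a, b in pairs) / len(pairs)

def robustness_drop(original: list[bool], paraphrased: list[bool]) -> float:
    """Success rate on original prompts minus success rate on paraphrases."""
    return sum(original) / len(original) - sum(paraphrased) / len(paraphrased)

def discrimination_auroc(confidences: list[float], correct: list[bool]) -> float:
    """Chance a random correct answer got higher confidence than a random
    incorrect one (ties count half). 0.5 means no better than chance."""
    pos = [c for c, ok in zip(confidences, correct) if ok]
    neg = [c for c, ok in zip(confidences, correct) if not ok]
    if not pos or not neg:
        raise ValueError("need both correct and incorrect answers")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical data: five identical runs of one task.
print(outcome_consistency([True, True, False, True, False]))  # 0.4
# Hypothetical data: same confidence on every answer, right or wrong.
print(discrimination_auroc([0.9, 0.9, 0.9, 0.9], [True, False, True, False]))  # 0.5
```

A task the agent solves 60% of the time still only gets 40% pairwise agreement here, which is the kind of detail a single benchmark run never surfaces.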

So far, scaling up to bigger models has not guaranteed improvements across all these dimensions. Calibration and robustness may improve while consistency takes a hit. More capability often means more behavioral range: more ways to do the same thing differently each time. Yet standard evaluation practice is to run a benchmark once, report a number, and move on.

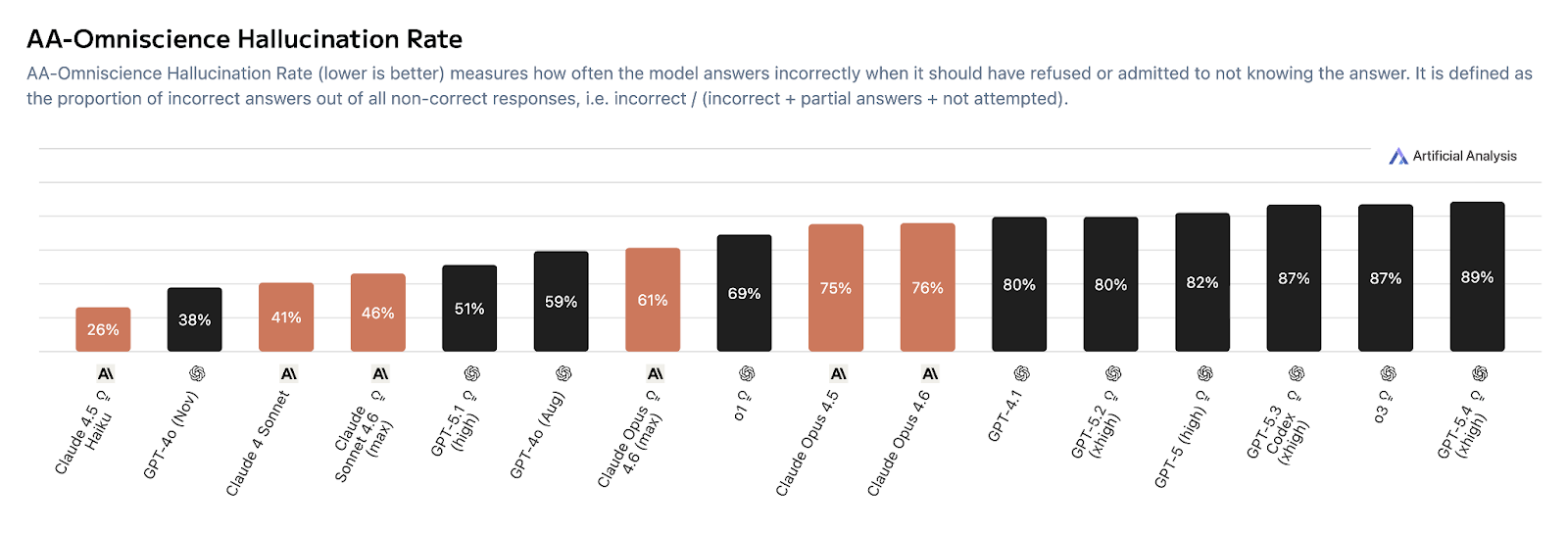

OpenAI’s latest and greatest model, GPT-5.4, released March 5, 2026, scores well on capability benchmarks while also showing notably high hallucination rates on tests of factual recall. And it’s not just OpenAI. The chart above shows a consistent pattern across both OpenAI and Anthropic: as models get more capable, their hallucination rates tend to go up, not down.

Even when industry evaluations acknowledge consistency, the discourse at large tends to downplay it.

The oft-cited METR evaluations show LLMs’ growing ability to complete complex and lengthy software tasks. Too often, this gets stated as “LLMs can now do 10 hours of work successfully,” rather than “LLMs can now do 10 hours of software-bound work successfully 50% of the time, and 1 hour of software-bound work successfully 80% of the time.” Put another way, imagine a colleague who hands you an hour’s worth of work five times a day. Four of those are fine. The fifth is complete slop, and you won’t know which one until you check. Now imagine that same colleague takes on a full day’s project. It’s a coin flip whether what they hand you is fantastic or unusable. Bigger wins, bigger headaches.
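The gap between those two phrasings falls out of how time-horizon numbers are usually summarized: fit a logistic curve of success probability against the log of task length, then read off where it crosses 50% or 80%. The sketch below assumes that logistic form, with made-up parameters `A` and `B` chosen only so the curve reproduces the illustrative 10-hour/50% and 1-hour/80% figures above. They are not METR’s fitted values. It also runs the colleague arithmetic, assuming failures are independent.

```python
import math

# Hypothetical parameters, chosen so p(1 hour) = 0.8 and p(10 hours) = 0.5.
# Assumed model: p(t) = 1 / (1 + exp(-(A - B * ln t))), with t in hours.
A = math.log(4)        # logit(0.8) = ln(0.8 / 0.2) = ln 4
B = A / math.log(10)   # places the 50% crossing at t = 10 hours

def success_probability(hours: float) -> float:
    """Modeled chance of completing a task of this (human-time) length."""
    return 1.0 / (1.0 + math.exp(-(A - B * math.log(hours))))

def horizon(p: float) -> float:
    """Task length in hours at which modeled success probability equals p."""
    logit = math.log(p / (1.0 - p))
    return math.exp((A - logit) / B)

print(round(horizon(0.5), 2))  # 10.0
print(round(horizon(0.8), 2))  # 1.0

# The colleague: five one-hour tasks a day, each independently 80% likely to be fine.
p_task = success_probability(1.0)
expected_failures = 5 * (1 - p_task)
p_at_least_one_bad = 1 - p_task ** 5
print(round(expected_failures, 2), round(p_at_least_one_bad, 2))  # 1.0 0.67
```

One bad deliverable per day is the average, but under these assumptions there is about a two-in-three chance that at least one of the five is bad on any given day.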

So the models are capable. They’re just not comparably reliable. If the AI industry doesn’t develop proper practices that meaningfully address the varying dimensions of reliability, then that red area from Anthropic’s paper, representing actual usage, might not grow smoothly at all. It might stall or grow in uneven ways. It might require reliability scaffolding as a prerequisite. If reliability is the actual bottleneck, then what kind of problem is it?

Reliability Is a Systems Problem

All signs point to reliability not being a property you can scale into existence the same way you can scale raw capability. Rather, it requires intentional design and engineering. It’s a systems problem. It requires architectural decisions, constraints, system design, evaluation, and deep contextual knowledge about the problem you’re trying to solve.

Pluribus, a system built by Carnegie Mellon University and Facebook in 2019, beat elite professional players at six-player no-limit Texas hold’em. For years, the broader poker AI field had been throwing scale and deep learning at the problem without cracking multiplayer play. The system that actually beat the pros cost about $144 in cloud compute to train and ran on a single server. It was a compound system consisting of self-play algorithms, game abstraction layers, and a real-time search engine. Multiple components with distinct roles, working together.

Cicero, a Diplomacy-playing bot that Meta built in 2022, operated on a similar principle: a language model for communication, a future simulator for strategy, a planning engine, all orchestrated together. And in the research paper, the authors mention that they set it up so it wouldn’t lie. Not because of alignment fears (they weren’t afraid it was going to blackmail anyone), but because it would’ve been unfair to other players otherwise.

Computer scientist Cal Newport explained in a recent interview that what made this possible was the architecture:

There’s no amorphous neural network... it’s six or seven components. We know what they do. [...] They wrote that simulator. It’s not a neural network. They wrote it and they just coded it to say, don’t look at possibilities where you lie. So it doesn’t. So that machine can’t lie. There’s no out of control piece to this.

Newport may be oversimplifying a bit there. Cicero’s honesty wasn’t exactly a hard-coded rule. The team trained the dialogue model on a curated subset of human games where players weren’t lying, and architecturally constrained the system so that what Cicero says stays tethered to what it actually plans to do. Neural networks were very much involved. But his broader point stands. Cicero is a specialized composed system rather than a single monolithic model doing everything. And because of that compositional architecture, the team could make a design decision about honesty and then actually enforce it.
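To illustrate the general pattern, and not Cicero’s actual implementation (which relied on trained models and filters), here is a toy sketch of how a composed system can enforce a design decision. A hypothetical dialogue component proposes candidate messages, and a separate piece of plain code discards any message whose stated moves aren’t backed by the planner’s chosen moves. The `Candidate` type, `honest_messages` function, and example orders are all made up for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    """A draft message from a hypothetical dialogue model."""
    text: str
    claimed_moves: frozenset[str]  # moves the message tells other players we'll make

def honest_messages(candidates: list[Candidate], planned_moves: set[str]) -> list[Candidate]:
    """Keep only messages whose claims are a subset of what we actually plan.

    The planner is the source of truth; the dialogue component may only
    say what the plan backs up. Because this is ordinary code, the rule
    holds no matter what the generator proposes.
    """
    return [c for c in candidates if c.claimed_moves <= planned_moves]

plan = {"A PAR - BUR", "F BRE - MAO"}
drafts = [
    Candidate("I'm moving my army to Burgundy.", frozenset({"A PAR - BUR"})),
    Candidate("I'll support you into Belgium.", frozenset({"A PAR S A PIC - BEL"})),
]
for msg in honest_messages(drafts, plan):
    print(msg.text)  # only the Burgundy message survives
```

The generator can be as unpredictable as it likes; the constraint lives at a boundary the team controls.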

What made these systems work was human judgment about architecture, constraints, and composition. The model was a component in a broader system.

None of this is radical or novel. This is just how functional software systems have always been built. The monolithic model narrative implicitly asks us to believe that everything we know about how working technology gets developed, deployed, and maintained simply stops applying. That’s the extraordinary claim. Not the idea that systems should be made up of specialized, legible, constrained components.

And the industry intuitively knows this. The meteoric rise of OpenClaw didn’t come from showcasing some breakthrough in raw model capability. It captured imaginations because it gave people the feeling of owning a composable system they could shape, something the paradigm of fully managed frontier models and tooling structurally can’t offer. You can use the same proprietary models under the hood, but owning the orchestration layer reintroduces the optionality that full dependence on a provider’s ecosystem can’t provide. And OpenClaw achieved this cultural moment even as it shipped with hundreds of security vulnerabilities and the reliability profile of a hobbyist’s proof-of-concept. Yes, it’s likely partly a fad, and we’ve seen prior viral waves of AI agents come and go (AutoGPT, BabyAGI, Manus, the rabbit R1’s “Large Action Model”, and others). But the gap between what people expect from these technologies and what they can actually deliver is probably narrower than it’s ever been, while still being much larger than most enthusiasts want to accept. That narrowing gap has produced a renewed and much larger base of interest in seriously pursuing composable, orchestrated systems. Even if OpenClaw itself isn’t the answer, the nerve it struck is telling.

I also see this in my work. I build production voice AI systems for businesses. Something as common as a scheduling voice agent, perhaps for a medical clinic, still requires significant engineering and experimentation. There is no tried-and-true way yet to build and deploy one that a clinic can just turn on, mostly stop thinking about, and trust to function without issues. Off-the-shelf solutions still require a considerable amount of customization (not just because of AI agent unreliability, but also because of all the challenges typical of IT integration). Almost nobody talks about this because the dissonance between that reality and the dominant narratives is too uncomfortable to reconcile. These narratives are buoyed by persistent myths like the notion of “effortless AI”, which I’ve written about before.

We’re simultaneously told these models are so powerful they’ll replace entire categories of jobs, and yet reliably automating a phone call to schedule an appointment requires a nontrivial amount of system design and engineering (not to mention ongoing operation and maintenance).

The AI Agent Reliability paper’s recommendations for deployers are pretty straightforward. They stress the importance of clearly distinguishing automation from augmentation. If a staff member reviewing appointments your voice agent booked during off-hours catches an error, calling the patient back later may be a bit annoying. But a fully autonomous AI agent that books an erroneous appointment, causing a patient to miss their visit, is unacceptable.
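The automation-versus-augmentation choice can be made an explicit, testable switch rather than an implicit property of the agent. Here is a minimal sketch, assuming a hypothetical clinic where, in augmentation mode, a human always makes the final call: during staffed hours the agent hands off to the front desk, and off-hours requests are queued for review. The staffing window, field names, and return labels are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, time

# Hypothetical front-desk staffing window for the clinic.
STAFFED_START = time(8, 0)
STAFFED_END = time(18, 0)

@dataclass
class ProposedBooking:
    patient_id: str        # hypothetical field names throughout
    slot: datetime         # appointment time the agent wants to book
    received_at: datetime  # when the call came in

def route(booking: ProposedBooking, autonomous: bool = False) -> str:
    """Decide what happens to a booking the voice agent proposes.

    Deliberately ignores the agent's self-reported confidence, since
    that signal tends to be weak.
    """
    if booking.slot <= booking.received_at:
        return "reject"  # deterministic sanity check, no model involved
    if autonomous:
        return "commit"  # automation: the agent's booking is final
    # Augmentation: a human confirms before anything is booked.
    staffed = STAFFED_START <= booking.received_at.time() < STAFFED_END
    return "transfer_to_staff" if staffed else "queue_for_review"

late_call = ProposedBooking("p-001", datetime(2026, 3, 10, 9, 30), datetime(2026, 3, 9, 22, 15))
print(route(late_call))  # queue_for_review
```

Flipping `autonomous` to `True` is a one-line change in code, but it is exactly the decision the paper suggests treating with the most care.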

The paper also recommends building internal evaluations tailored to the specific context and considering reliability thresholds before moving from sandbox to production, “the way aviation requires certification before service.”
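In its simplest form, a reliability threshold is a gate that blocks promotion until every measured dimension clears a bar you set for your own context. The metric names and numbers below are hypothetical placeholders. The design point is that each dimension is checked separately rather than averaged into one score, so strong accuracy can’t mask poor consistency, and an unmeasured dimension counts as a blocker.

```python
# Hypothetical per-dimension minimums a team might set for its own use case.
THRESHOLDS = {
    "accuracy": 0.95,
    "consistency": 0.90,
    "paraphrase_accuracy": 0.92,
    "calibration_auroc": 0.75,
}

def promotion_blockers(metrics: dict[str, float],
                       thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """List every dimension that is unmeasured or below its threshold.

    An empty list means the candidate may move from sandbox to production.
    """
    blockers = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        if value is None:
            blockers.append(f"{name}: not measured")
        elif value < minimum:
            blockers.append(f"{name}: {value:.2f} < {minimum:.2f}")
    return blockers

# Hypothetical evaluation results for a candidate agent.
candidate = {"accuracy": 0.97, "consistency": 0.71, "paraphrase_accuracy": 0.93}
for problem in promotion_blockers(candidate):
    print(problem)
# consistency: 0.71 < 0.90
# calibration_auroc: not measured
```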

That evaluation work itself can be a competitive advantage. It’s the type of institutional knowledge you can only build up by experimenting with your actual data. Generic benchmarks won’t tell you how a model will behave in your environment, with your data, or under your constraints. Customized evaluations are a necessity, and the work of building them is deeply contextual. It resists commodification.

You don’t have to take my word on any of this. NVIDIA, the company that has arguably benefited the most from the AI scaling gold rush, published a paper in September 2025 literally titled “Small Language Models are the Future of Agentic AI”. The paper argues that for the repetitive, specialized tasks that AI agents actually perform in practice, smaller models are more suitable and more economical, and that the future is heterogeneous systems where small specialized models handle the bulk of the work and large models get invoked selectively. For the company selling the massively expensive shovels, that’s a notable thing to put in writing. NVIDIA also has its own growing family of open-source smaller models built for exactly this kind of modular use, from language models (Nemotron) to speech-to-text models (Parakeet). Then there’s NVIDIA’s Groq “acquisition”: NVIDIA spent roughly $20 billion acquiring rights to Groq’s technology, whose entire selling point is custom AI chips built for low-latency, low-cost inference. That bet lines up with the view laid out in the SLM paper. If the future is heterogeneous agentic systems running lots of smaller specialized models, you want inference infrastructure optimized for that, not just bigger clusters running bigger models.
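Here is a minimal sketch of the heterogeneous pattern the NVIDIA paper describes: route each request to a small specialized model by default and escalate to a large model only when the request falls outside what the small model was built for. The model identifiers, task categories, and the ambiguity heuristic are all hypothetical placeholders. In practice the escalation rule should come out of the kind of contextual evaluations described above, not a keyword list.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model identifiers; substitute whatever you actually deploy.
SMALL_MODEL = "small-specialized-model"
LARGE_MODEL = "large-general-model"

# Hypothetical task types the small model was fine-tuned and evaluated for.
SMALL_MODEL_TASKS = {"classify_intent", "extract_appointment_time", "confirm_details"}

@dataclass
class Route:
    model: str
    reason: str

def choose_model(task_type: str, text: str, is_ambiguous: Callable[[str], bool]) -> Route:
    """Default to the small model; escalate to the large one selectively."""
    if task_type not in SMALL_MODEL_TASKS:
        return Route(LARGE_MODEL, "task outside the small model's scope")
    if is_ambiguous(text):
        return Route(LARGE_MODEL, "ambiguous input")
    return Route(SMALL_MODEL, "routine, in-scope request")

def naive_ambiguity(text: str) -> bool:
    """Placeholder heuristic; a real check should be validated on your own data."""
    hedges = {"maybe", "unsure", "either"}
    return len(text.split()) > 60 or bool(hedges & set(text.lower().split()))

print(choose_model("classify_intent", "I need to book a checkup next week", naive_ambiguity).model)
# small-specialized-model
print(choose_model("summarize_history", "Patient history follows", naive_ambiguity).model)
# large-general-model
```

Passing the ambiguity check in as a function keeps the routing policy itself one of the swappable, testable components.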

Optionality and Agency

In addition to making reliability tractable, composed systems also preserve something else that the dominant paradigm structurally forecloses: optionality.

Organizations working within composed systems can keep their hands on all the various knobs. At each boundary and breakpoint, they get to make decisions about what to build, what to outsource, what to control tightly, and where to accept tradeoffs. Not every component will be locally owned or fully controlled. Sometimes a frontier model is absolutely the right tool for a specific part of the system or workflow. But the wider architecture itself preserves the agency to decide. Maybe one part of your system requires more external capabilities than you’d prefer, but you’re able to balance that with tighter control in other parts. More responsibility comes with that, yes. More upfront and maintenance labor too. But it also means your institutional knowledge, your domain expertise, your proprietary data, all of it becomes woven into whatever your competitive edge is, rather than remaining an inert input to a commodity model that everyone else also has access to.
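In code, keeping your hands on the knobs often just means putting a narrow interface at each boundary so any component can be swapped without touching the rest. Here is a minimal sketch, assuming a voice-agent pipeline split into speech-to-text, reasoning, and text-to-speech stages. The interface and stub class names are hypothetical, and the stubs stand in for whatever hosted or local models you would actually plug in.

```python
from typing import Protocol

class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...

class Reasoner(Protocol):
    def respond(self, text: str) -> str: ...

class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...

class VoicePipeline:
    """The orchestration layer you own; each stage is replaceable."""

    def __init__(self, stt: SpeechToText, brain: Reasoner, tts: TextToSpeech):
        self.stt, self.brain, self.tts = stt, brain, tts

    def handle(self, audio: bytes) -> bytes:
        text = self.stt.transcribe(audio)
        reply = self.brain.respond(text)
        return self.tts.synthesize(reply)

# Hypothetical stubs standing in for real components (a local STT model, a hosted LLM, etc.).
class EchoSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")

class RuleBasedReasoner:
    def respond(self, text: str) -> str:
        if "appointment" in text.lower():
            return "Sure, what day works for you?"
        return "Could you say that again?"

class BytesTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")

pipeline = VoicePipeline(EchoSTT(), RuleBasedReasoner(), BytesTTS())
print(pipeline.handle(b"I'd like an appointment"))  # b'Sure, what day works for you?'
```

Swapping `RuleBasedReasoner` for a frontier-model client, or `EchoSTT` for a locally run speech model, changes one constructor argument. Nothing else in the pipeline needs to know.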

The monolithic frontier path collapses this. It concentrates dependency, homogenizes capability, and often leaves organizations waiting for someone else’s next model release to solve their problems.

The incentives for composed systems aren’t purely technical. Compliance and privacy requirements, liability and risk considerations[1], competitive differentiation, the basic economics of generating tokens from expensive frontier models: everything about the current landscape points toward compound, specialized AI systems becoming the norm. The model as one component in a broader system, not the whole product.

The Question That Follows

Which raises a final question.

Hundreds of billions of dollars are being poured into hyperscale data centers right now. Communities are being asked to accept higher electricity prices, pollution, and strained resources. All of it is premised on the assumption that the future belongs to ever larger general-purpose models and that there will be insatiable demand for them.

But if the actual path to ubiquitous, reliable “AI that just works” runs through specialized, composed, contextual systems (systems that are smaller, more diverse, and more amenable to a distributed mosaic of compute architectures and environments), then the assumptions underwriting that investment may be fundamentally misaligned with where the real value is heading. Large general-purpose models would become one component in a much broader ecosystem, in stark contrast to the center of gravity the current narratives require them to be.

What happens to all of that if it turns out most businesses, most use cases, most solutions don’t actually need them nearly as much as everyone currently assumes?

[1] Some insurers are already seeking to exclude AI risks from existing policies.
